There’s a moment early on when you realize that “doing research” and “doing marketing research” are completely different things. You think you’re just going to gather some data, run a few surveys, maybe pull some numbers together — and then suddenly you’re staring at a blank page trying to figure out what question you were even supposed to be answering.

If you’re trying to learn the marketing research process, start by accepting that it’s not a single skill: it’s a sequence of decisions, each one constraining what’s possible in the next. Get the problem definition wrong and every insight downstream is built on a crack. Understand the sequence and you don’t just collect better data; you become the person in the room who actually knows what the data means.

- The research design you choose before data collection largely determines the quality of your final insights

- Most beginners spend too long gathering data and too little time defining the right problem

- The difference between useful research and decorative research is whether it changes a decision

What Marketing Research Actually Means

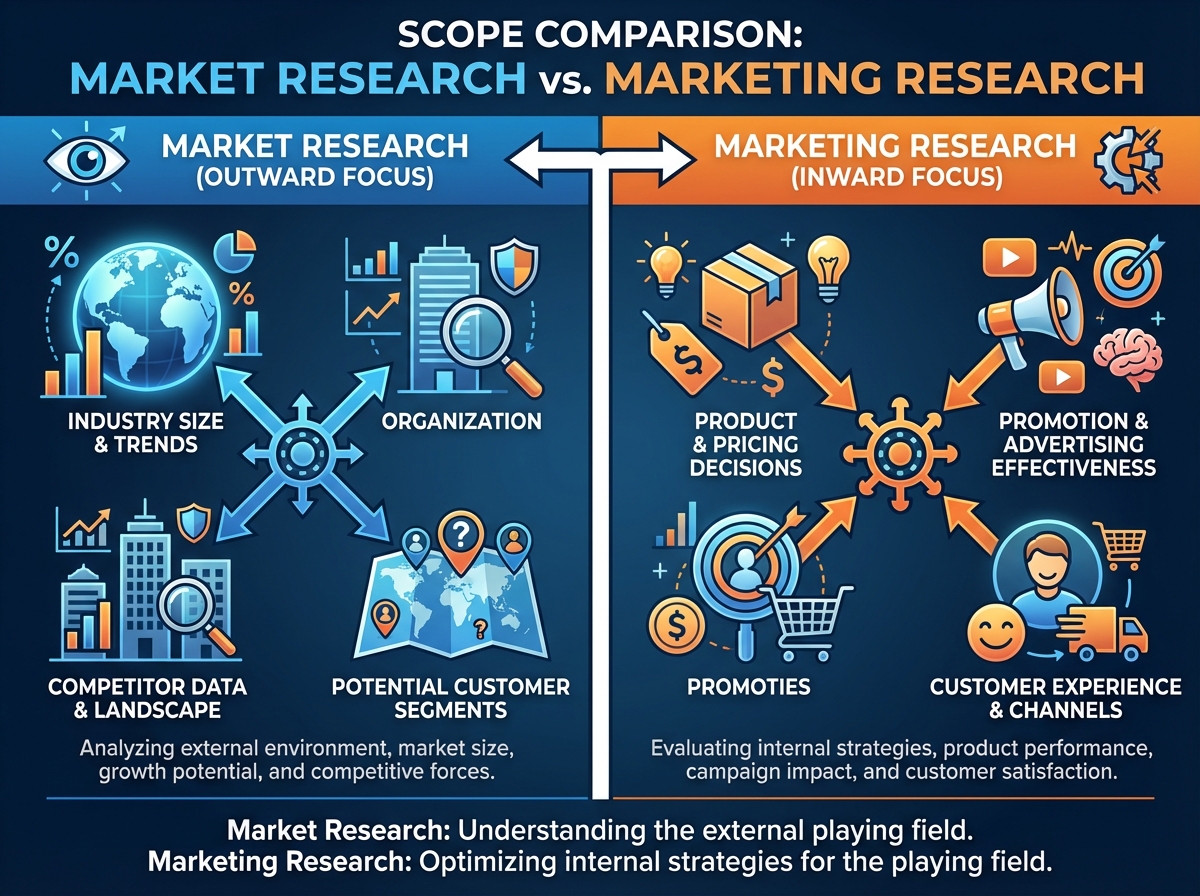

Marketing research is the systematic process of collecting, processing, and analyzing data to give decision-makers the information they need — at the right time, in the right form. It’s not market research (which looks outward at industry size and trends). Marketing research is more tightly coupled to specific decisions: Should we reposition this product? Which message resonates with segment B? Why is retention dropping in this cohort?

For someone coming in from a general marketing background, this distinction matters immediately. You’re not building a database of interesting facts. You’re solving a specific problem that someone with budget authority needs answered.

| Term | Focus | Example Question |
|---|---|---|
| Market Research | Industry-level trends | How big is the plant-based food market? |
| Marketing Research | Decision-specific insights | Which of these two taglines drives more trial intent? |
| Consumer Research | Behavioral and attitudinal data | Why do users abandon the app after day 3? |

Three things that separate marketing research from casual data gathering:

- It starts with a clearly defined decision, not a vague curiosity

- It commits to a methodology before a single data point is collected

- Its output is a recommendation, not just a report

Where the History of Marketing Research Actually Matters

Most people skip the history section. That’s a mistake. Understanding how marketing research evolved from early mass-media models to fragmented digital channels gives you something practical: it explains why the field has so many competing frameworks, and why research design has to adapt to context rather than follow a fixed script.

Conventional mass media marketing operated on reach and frequency assumptions — you broadcast, you measure sales lift, you repeat. The research methods that grew up around that era (recall studies, aided awareness surveys) made sense for one-to-many communication. When marketing fragmented into dozens of digital touchpoints, those methods didn’t disappear — they just became one tool among many, often misapplied.

The emergence of internet-based business models didn’t just add new channels. It changed the relationship between sales and marketing from a handoff model into a continuum — where research now has to account for the full customer journey, not just the awareness-to-purchase window. If you understand this evolution, you stop treating research as an isolated project and start treating it as an ongoing input into strategy.

The Stage Everyone Gets Wrong: Defining the Problem

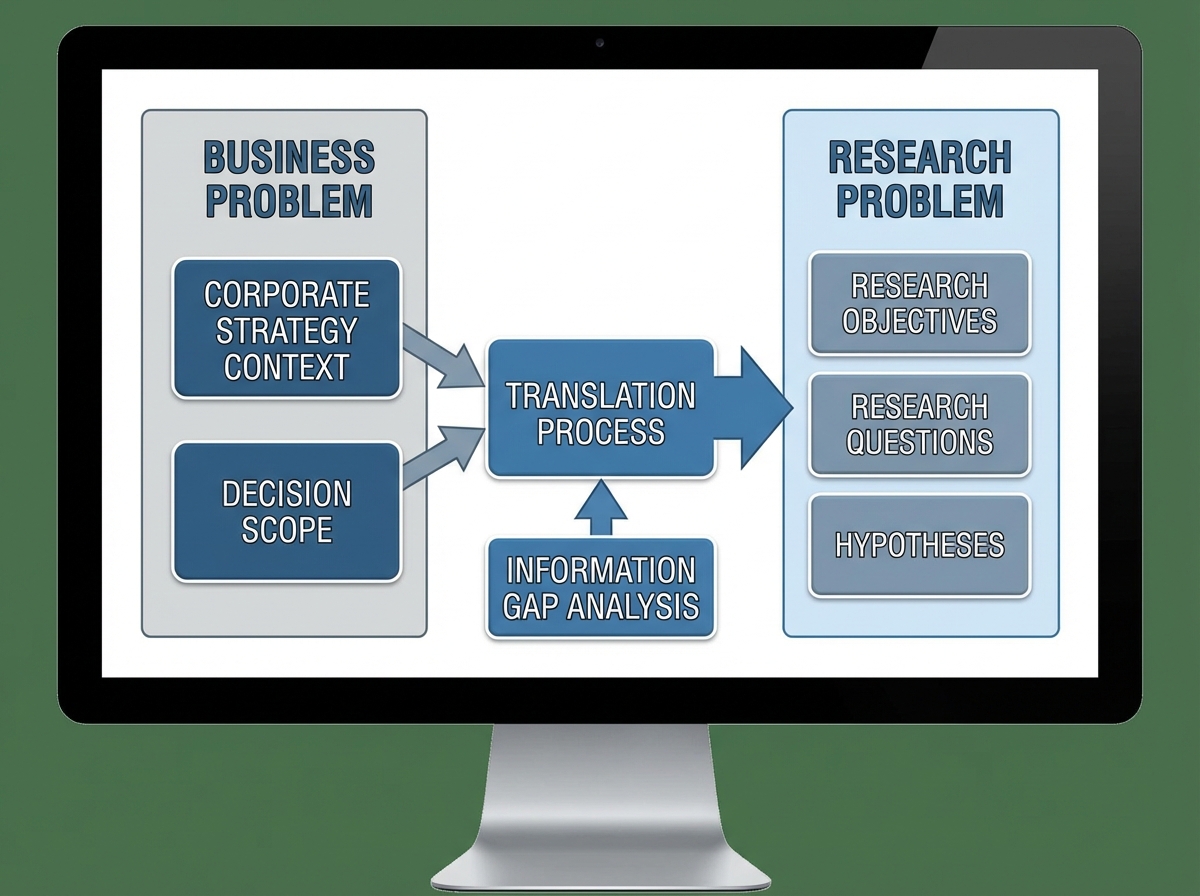

The single biggest mistake people make when learning marketing research is jumping to data collection before the research problem is fully defined. It feels productive to be out there gathering information. It feels abstract and slow to sit in a room arguing about what question you’re actually trying to answer. But time spent sharpening the problem definition usually pays for itself several times over in analysis you don’t have to redo on the back end.

Defining the research problem isn’t just writing a sentence about what you want to know. It requires inputs: understanding the corporate strategy context, knowing which decisions are actually on the table, and identifying what information would change those decisions. You need to understand the business problem first, then translate it into a research problem. These are not the same thing. “Our sales are declining” is a business problem. “We need to understand whether declining sales are driven by awareness loss or consideration loss among 25–34-year-old urban buyers” is a research problem.
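To make the awareness-versus-consideration question concrete, here is a minimal sketch that splits a drop in purchases into funnel stages. The three-stage funnel (aware, then considering, then buying), the field names, and every number are hypothetical and chosen only for illustration; a real study would take these rates from tracking or survey data for the segment in question.

```python
from dataclasses import dataclass


@dataclass
class Funnel:
    """Hypothetical funnel snapshot for one segment in one period."""
    aware: float              # share of the segment aware of the brand
    consider_if_aware: float  # share of aware buyers who consider it
    buy_if_consider: float    # share of considerers who buy

    def purchase_rate(self) -> float:
        # Purchase rate is the product of the stage conversion rates.
        return self.aware * self.consider_if_aware * self.buy_if_consider


def biggest_drop(before: Funnel, after: Funnel) -> str:
    """Return the stage whose conversion rate fell the most in relative terms."""
    stages = ["aware", "consider_if_aware", "buy_if_consider"]
    relative_change = {
        s: getattr(after, s) / getattr(before, s) - 1 for s in stages
    }
    return min(relative_change, key=relative_change.get)


# Made-up numbers for illustration only.
last_year = Funnel(aware=0.60, consider_if_aware=0.40, buy_if_consider=0.50)
this_year = Funnel(aware=0.58, consider_if_aware=0.30, buy_if_consider=0.50)

print(f"{last_year.purchase_rate():.3f} -> {this_year.purchase_rate():.3f}")
print(biggest_drop(last_year, this_year))
```

Because the purchase rate is the product of the stage rates, comparing relative changes shows which stage accounts for most of the decline. In this invented example awareness barely moved while consideration fell sharply, which is exactly the kind of answer the research problem above is designed to produce.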

The tools at this stage — situational analysis, symptom mapping, decision trees — exist to force precision. When you use them seriously, the research problem that emerges is often narrower than what the stakeholder originally asked for. That narrowing isn’t scope reduction. It’s how you avoid spending three months producing a 60-page report that answers a question nobody was actually deciding on.

Choosing a Research Design Before You Touch Any Data

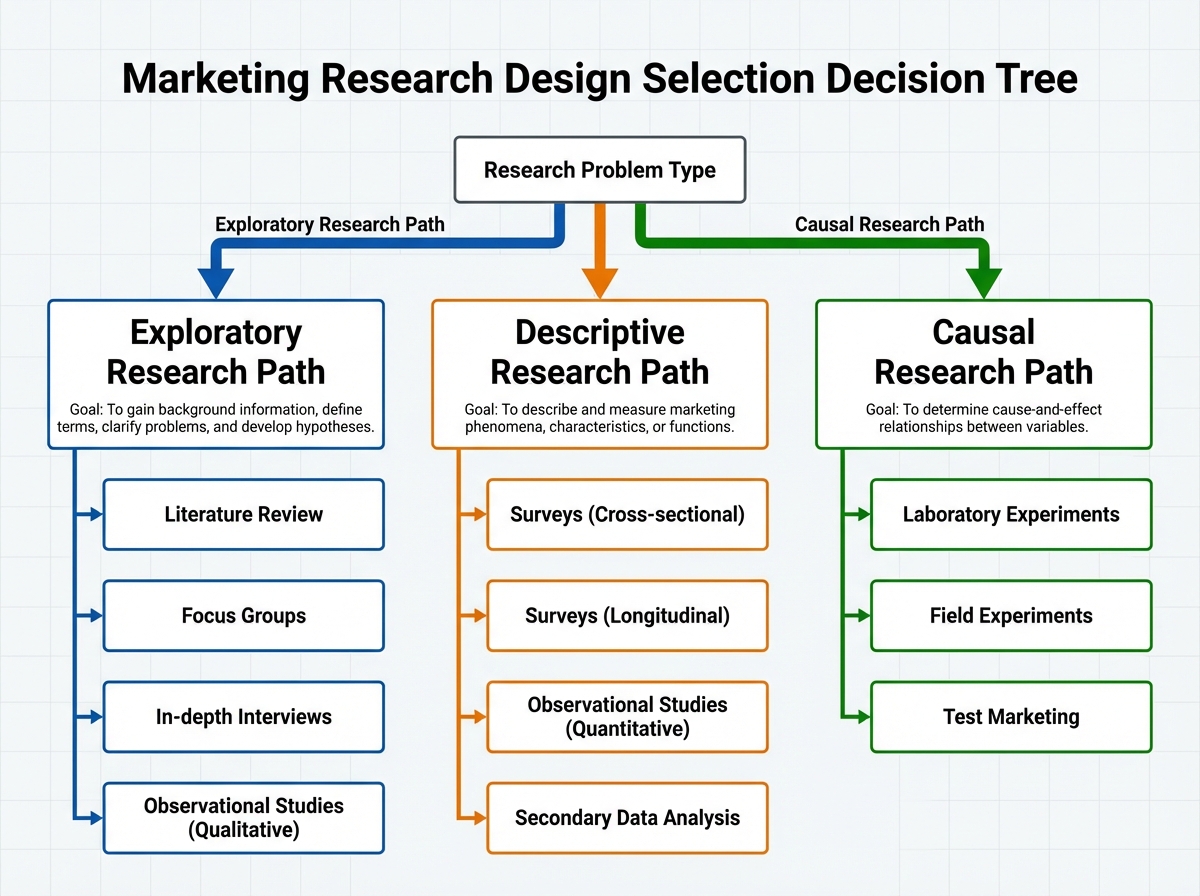

Once the problem is defined, you face the most consequential methodological decision in the entire process: exploratory, descriptive, or causal? Each research design type exists for a different type of question, and using the wrong one is like using a ruler to measure temperature — technically an activity, but not useful.

Exploratory research is what you do when you don’t know enough to ask structured questions yet. It’s interviews, ethnography, open-ended surveys — methods that generate hypotheses rather than test them. Descriptive research tells you what is happening: surveys, observational studies, secondary data analysis. Causal research — experiments and quasi-experiments — tells you why. The mistake most beginners make here is reaching for descriptive methods (surveys are familiar) when the problem actually demands exploratory methods (you don’t know enough yet to write good survey questions).
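To show what the causal end of the spectrum looks like in practice, here is a minimal sketch analyzing a hypothetical randomized test of the tagline question from the table above: respondents are randomly shown tagline A or tagline B and asked whether they would try the product. It applies a standard two-sided, two-proportion z-test with a normal approximation. The counts are invented, and a real experiment would also fix its sample size and significance threshold before fielding.

```python
import math


def two_proportion_z_test(yes_a: int, n_a: int, yes_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two independent proportions.

    Returns (z statistic, two-sided p-value) using the pooled standard error
    and a normal approximation, which is reasonable when counts are not tiny.
    """
    p_a = yes_a / n_a
    p_b = yes_b / n_b
    pooled = (yes_a + yes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Standard normal CDF via the error function: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    phi = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - phi)
    return z, p_value


# Hypothetical counts: 400 respondents randomly assigned to each tagline.
z, p = two_proportion_z_test(yes_a=130, n_a=400, yes_b=100, n_b=400)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Random assignment is what lets a difference in trial intent be attributed to the tagline itself; the same comparison run on non-randomized survey data would only be descriptive.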

The inputs into design selection matter: what data already exists, what timeline the decision requires, what budget is available, and what level of confidence the decision actually needs. A low-stakes tactical decision doesn’t need a randomized controlled experiment. A major product repositioning does. Research design is fundamentally about matching rigor to stakes, which means judging honestly how much is riding on the decision before choosing a method.
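The rules of thumb in this section can be sketched as a tiny triage function. It is a hypothetical helper that deliberately collapses the real inputs (existing data, timeline, budget, required confidence) into three yes/no answers, so treat it as a thinking aid rather than a decision procedure.

```python
from enum import Enum


class Design(Enum):
    EXPLORATORY = "exploratory"  # interviews, ethnography, open-ended questions
    DESCRIPTIVE = "descriptive"  # surveys, observation, secondary data
    CAUSAL = "causal"            # experiments and quasi-experiments


def suggest_design(can_write_structured_questions: bool,
                   need_cause_and_effect: bool,
                   high_stakes: bool) -> Design:
    """Rough triage mirroring the text's rules of thumb (hypothetical helper)."""
    if not can_write_structured_questions:
        # Not enough is known yet to ask good closed questions: generate hypotheses first.
        return Design.EXPLORATORY
    if need_cause_and_effect and high_stakes:
        # A major decision that hinges on "why" can justify the cost of an experiment.
        return Design.CAUSAL
    # Otherwise, measure what is happening at a rigor that matches the stakes.
    return Design.DESCRIPTIVE


print(suggest_design(False, True, True).value)  # exploratory
```

Note the ordering: the exploratory check comes first because, as the text argues, reaching for a survey before you can write good questions is the most common misstep.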

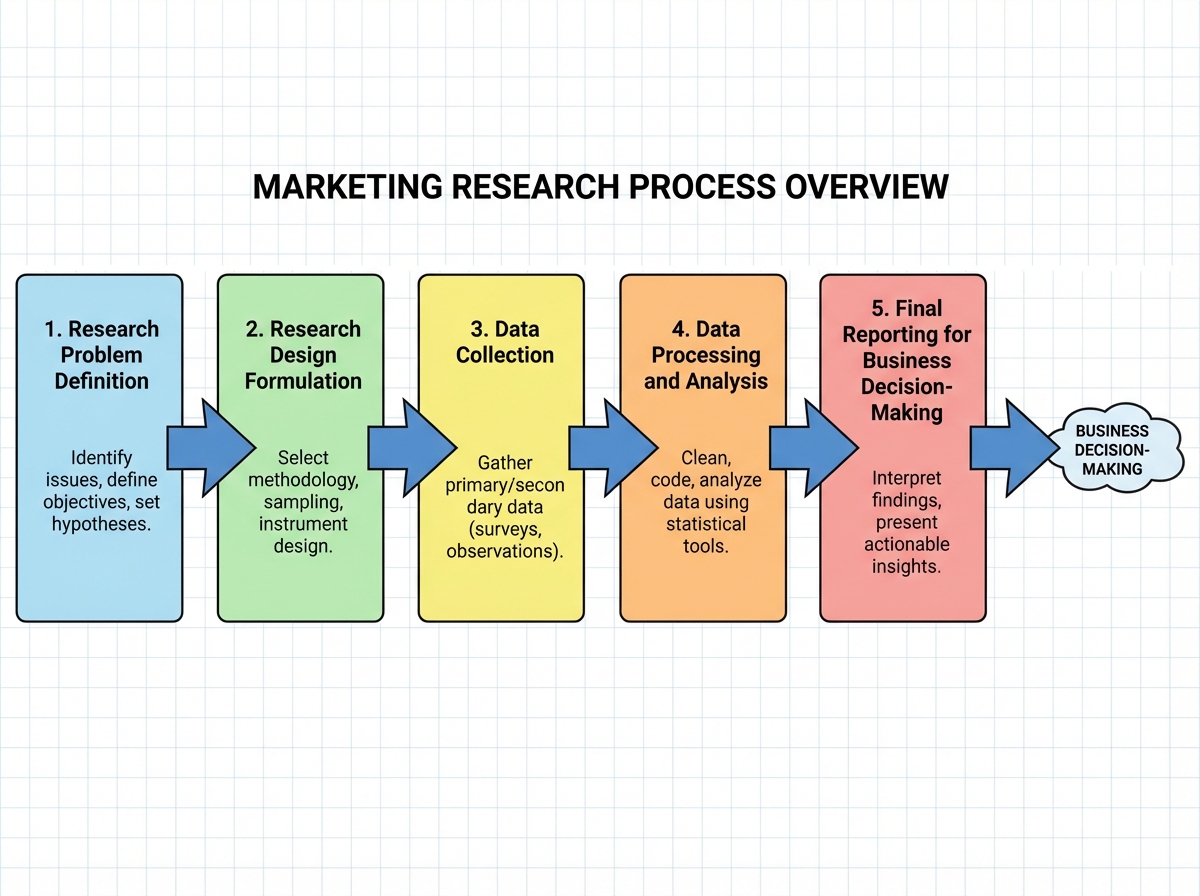

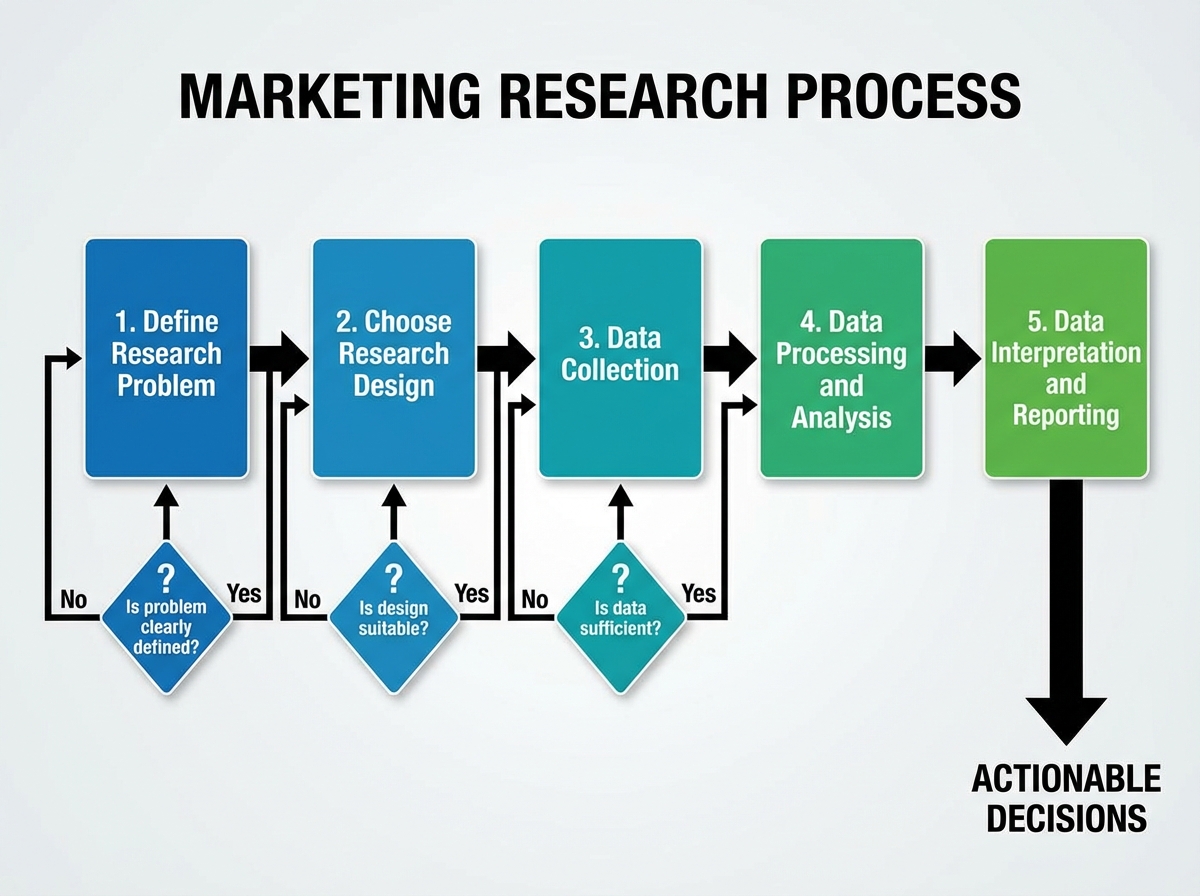

The Marketing Research Process, Stage by Stage

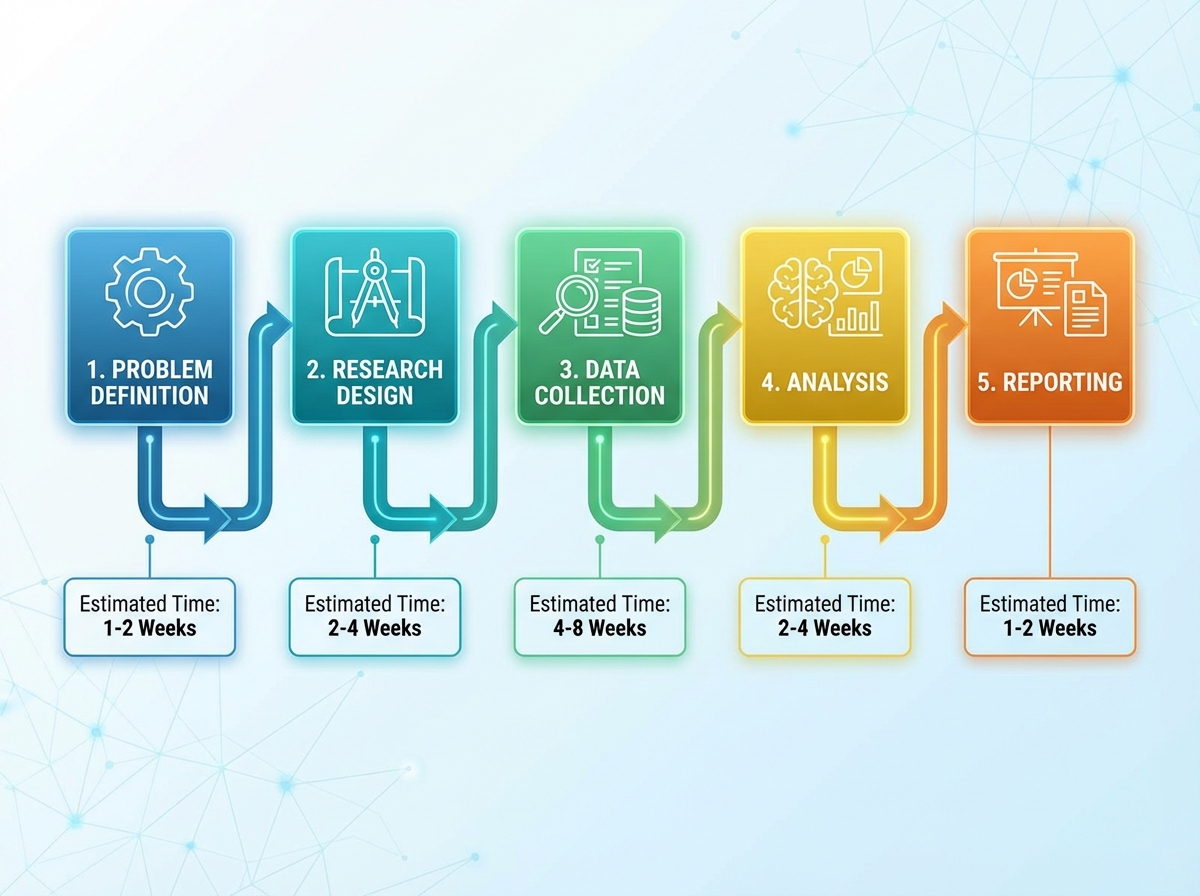

Once you’ve internalized the logic behind the first two stages, the rest of the process becomes more intuitive — not easier, but more navigable. Here’s how the full sequence plays out in real terms:

| Stage | What You’re Actually Doing | Time Estimate |
|---|---|---|
| Define the research problem | Translate business question to research question | 1–2 weeks |
| Choose research design | Match methodology to problem type and decision stakes | 3–5 days |
| Data collection | Execute fieldwork: surveys, interviews, secondary data | 1–3 weeks |
| Data processing and analysis | Clean, code, and analyze for patterns | 1–2 weeks |
| Data interpretation and reporting | Translate findings into actionable recommendations | 3–5 days |
| Total | Full research project cycle | 4–8 weeks |

Order matters more than speed here — compressing the early stages to save time almost always means redoing them. And if you’re moving slower than this table suggests, that’s not failure; most real projects run longer once stakeholder alignment and revision cycles are factored in.
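As a quick arithmetic check on the stage table, the sketch below sums the stage ranges. The table doesn’t say whether its day estimates are calendar or working days, so the helper takes a days-per-week parameter; that parameter and the data layout are illustrative assumptions.

```python
# Stage estimates copied from the table: (stage, low, high, unit).
STAGES = [
    ("Define the research problem", 1, 2, "weeks"),
    ("Choose research design", 3, 5, "days"),
    ("Data collection", 1, 3, "weeks"),
    ("Data processing and analysis", 1, 2, "weeks"),
    ("Data interpretation and reporting", 3, 5, "days"),
]


def total_weeks(days_per_week: int) -> tuple[float, float]:
    """Sum the stage ranges in days, then express the total in weeks."""
    low_days = high_days = 0
    for _stage, low, high, unit in STAGES:
        factor = days_per_week if unit == "weeks" else 1
        low_days += low * factor
        high_days += high * factor
    return low_days / days_per_week, high_days / days_per_week


print(total_weeks(7))  # treating day estimates as calendar days
print(total_weeks(5))  # treating day estimates as working days
```

With 7-day weeks the stages sum to roughly 3.9 to 8.4 weeks; with 5-day working weeks, 4.2 to 9 weeks. Either way, the 4–8 week total is a rounded figure, which fits the point that real projects often run longer.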

Data Collection Is Where Theory Meets Reality

You can design a perfect study on paper and have it fall apart during fieldwork. Response rates are lower than expected. Interview participants give socially acceptable answers instead of honest ones. Secondary data sources turn out to be two years old. Data collection is where every assumption in your research design gets tested by contact with reality.
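One way to plan for lower-than-expected response rates is to size the invitation list against a pessimistic rate rather than a hopeful one. The sketch below does that arithmetic; the target of 400 completes and the 5–10% response rates are hypothetical planning numbers, not benchmarks.

```python
import math


def invitations_needed(target_completes: int, response_rate: float) -> int:
    """Invitations required to reach a target number of completed responses."""
    if not 0 < response_rate <= 1:
        raise ValueError("response_rate must be in (0, 1]")
    # Round up: a fractional invitation still means sending one more.
    return math.ceil(target_completes / response_rate)


# Compare an optimistic plan against more pessimistic ones.
for rate in (0.10, 0.07, 0.05):
    print(f"{rate:.0%} response rate -> {invitations_needed(400, rate)} invitations")
```

Running the numbers for several rates before fieldwork turns "response rates were lower than expected" from a surprise into a scenario you already budgeted for.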

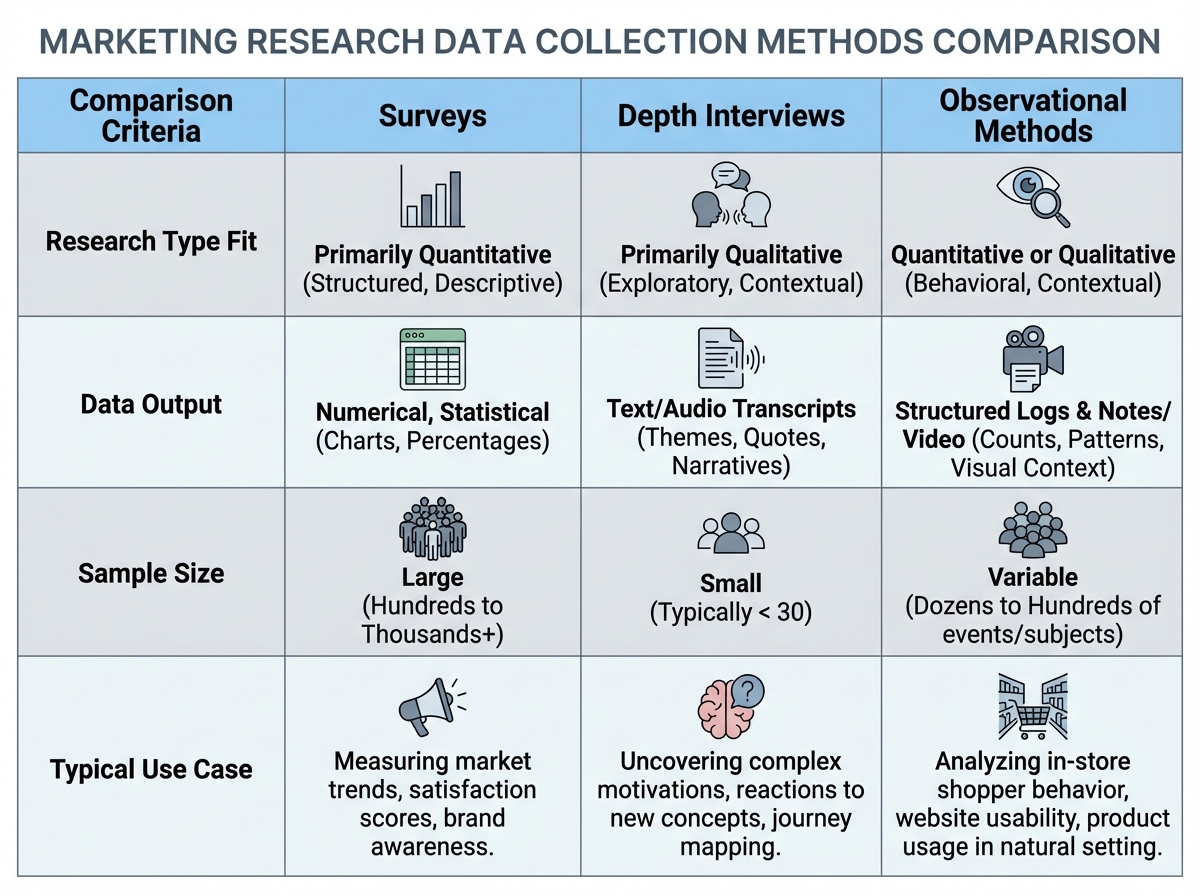

The discipline here is knowing which data collection method fits the research design you chose, not the one you’re most comfortable with. Surveys are high-volume and structured; they work for descriptive research with clear variables. Depth interviews are slow and qualitative; they work for exploratory research where the goal is to surface new dimensions. Observational methods (watching how people actually behave in a retail environment, for example) capture behavior that self-report methods systematically miss. Defaulting to surveys regardless of the research question is where a lot of marketing research quietly loses validity, and nobody notices until the recommendations fail to land.

Processing and Analyzing Data Without Losing the Thread

Raw data is not an insight. This is the stage where most junior practitioners either over-interpret or under-interpret — either seeing patterns that aren’t there or reporting frequencies without drawing any conclusions at all. Data processing starts with cleaning: removing incomplete responses, recoding variables, checking for outliers that might be errors rather than real variance. None of this is glamorous, but skipping it produces analysis built on noise.
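Here is a minimal sketch of those three cleaning steps on survey responses stored as plain dictionaries. The field names (`segment`, `age`, `consideration`), the segment recoding map, the 1–5 consideration scale, and the plausible age range of 18 to 99 are all hypothetical; a real project would take these rules from its questionnaire and codebook.

```python
REQUIRED = ("segment", "age", "consideration")

# Recode inconsistent free-text segment labels into standard codes (hypothetical mapping).
SEGMENT_CODES = {"a": "A", "segment a": "A", "b": "B", "segment b": "B"}


def clean(responses: list[dict]) -> tuple[list[dict], list[dict]]:
    """Return (clean rows, rows flagged for manual review)."""
    kept, flagged = [], []
    for row in responses:
        # 1. Drop incomplete responses: any required field missing or blank.
        if any(row.get(field) in (None, "") for field in REQUIRED):
            continue
        # 2. Recode variables into consistent types and categories.
        segment = SEGMENT_CODES.get(str(row["segment"]).strip().lower())
        recoded = {
            "segment": segment,
            "age": int(row["age"]),
            "consideration": int(row["consideration"]),
        }
        # 3. Flag values that look like entry errors rather than real variance.
        if (segment is None or not 18 <= recoded["age"] <= 99
                or not 1 <= recoded["consideration"] <= 5):
            flagged.append(recoded)
        else:
            kept.append(recoded)
    return kept, flagged


raw = [
    {"segment": "Segment A", "age": "29", "consideration": "4"},
    {"segment": "b", "age": "31", "consideration": ""},    # incomplete
    {"segment": "B", "age": "290", "consideration": "3"},  # likely a typo
    {"segment": "a", "age": "45", "consideration": "2"},
]
kept, flagged = clean(raw)
print(len(kept), len(flagged))
```

Suspicious rows are set aside for review rather than silently deleted, since an apparent outlier can turn out to be real variance.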

Analysis should always trace back to the research problem defined in the first stage. If you defined the problem as “understanding whether awareness or consideration is driving declining sales in segment X,” your analysis should be structured around that question, not a general walkthrough of every variable in the dataset. The most useful analytical outputs are comparative: How does Segment A differ from Segment B on consideration intent? How does behavior change between the first purchase and the second? Which attributes are most strongly associated with repurchase intent? Questions like these are answerable only if the research design was structured to collect comparable data across those dimensions.
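A minimal sketch of two of those comparative questions, using only the standard library: it compares mean consideration intent by segment and ranks attributes by their Pearson correlation with repurchase intent. The records, field names, and rating scales are made up for illustration, and a correlation only describes association; establishing cause would need a causal design.

```python
from collections import defaultdict
from statistics import mean


def mean_by_group(rows, group_key, value_key):
    """Average of value_key within each level of group_key."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_key]].append(row[value_key])
    return {g: mean(vals) for g, vals in groups.items()}


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical survey records (ratings on 1–5 scales).
rows = [
    {"segment": "A", "consideration": 4, "price": 3, "taste": 5, "repurchase": 5},
    {"segment": "A", "consideration": 5, "price": 2, "taste": 4, "repurchase": 4},
    {"segment": "B", "consideration": 2, "price": 4, "taste": 2, "repurchase": 2},
    {"segment": "B", "consideration": 3, "price": 3, "taste": 3, "repurchase": 3},
    {"segment": "B", "consideration": 2, "price": 5, "taste": 1, "repurchase": 2},
]

# Segment A vs Segment B on consideration intent.
print(mean_by_group(rows, "segment", "consideration"))

# Attributes ranked by strength of association with repurchase intent.
repurchase = [r["repurchase"] for r in rows]
ranking = sorted(
    ((attr, pearson([r[attr] for r in rows], repurchase)) for attr in ("price", "taste")),
    key=lambda pair: abs(pair[1]),
    reverse=True,
)
print(ranking)
```

Both outputs only make sense because every respondent answered the same questions about segment, consideration, attributes, and repurchase, which is the point about structuring the design to collect comparable data.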

Interpretation and Reporting: The Stage That Decides Whether Research Gets Used

Here’s the uncomfortable truth about research reporting: most research reports never change a single decision. They get read, nodded at, and filed. The reason is almost always that the report presents findings without recommendations — it describes what was observed without saying what should be done differently as a result.

Interpretation means translating patterns in data into implications for specific decisions. It requires going back to the original research problem and asking: given what we found, what should the decision-maker do that they wouldn’t have done without this information? That question should structure the entire report. Findings come first only to establish credibility; recommendations come last but are what the report actually exists to deliver. If the person who commissioned the research could have made the same decision without reading your report, the research failed — even if the methodology was flawless.

How Marketing Research Connects to Everything Else in Strategy

Marketing research doesn’t sit inside a single phase of the planning cycle — it feeds every other aspect of marketing strategy. Segmentation decisions depend on research into who the customer actually is, not who the brand assumed they were. Positioning decisions depend on research into how the brand is currently perceived relative to competitors. Pricing decisions depend on research into willingness to pay and price sensitivity. Communication decisions depend on research into which messages resonate with which segments.

This is why learning marketing research as a standalone skill has a multiplier effect. Once you understand how to structure a research problem and choose an appropriate methodology, you can apply that logic across every area of marketing planning. The person who understands research design isn’t just a data collector; they become the person in the organization who asks the right questions before major decisions get made. That’s a different kind of value from execution skills, and it compounds over time.

Looking back on the process — from the confusion of an undefined problem to the clarity of a recommendation that actually lands — what stays with you isn’t the statistical techniques or the survey platforms. It’s the discipline of asking: what decision does this research need to support? Everything else follows from that.

Define the decision before the question. Before writing a single survey item, write down the decision that will be made differently based on what you learn. If you can’t name it, you’re not ready to collect data.

Separate the business problem from the research problem. Restate any business problem as an information gap — what you don’t know that, if you knew it, would change what you do.

Match your research design to the question type. Exploratory questions need qualitative methods. Confirmatory questions need quantitative ones. Using the wrong design produces confident answers to the wrong questions.

Audit your secondary data before designing primary research. Someone has often already collected data adjacent to what you need. Finding it first prevents duplicating work and helps you focus primary research on the genuine gaps.

Build your analysis structure before fieldwork begins. Sketch the tables and charts you expect to produce before you collect a single response. If you can’t sketch them, your research design is incomplete.

Write the recommendation before you write the findings. Draft a provisional recommendation based on your hypothesis, then treat research as a test of that draft. It keeps you focused on decision-relevant analysis rather than descriptive reporting.

Report implications, not just findings. For every key finding, write one sentence beginning with “This means that…” — and make that sentence reference a specific decision or action.

Test your report on someone outside the project. If they can’t tell you what decision the research supports after reading the executive summary, rewrite the executive summary.
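To make the advice about building the analysis structure before fieldwork concrete, one lightweight approach is to write the planned tables down as data and check them against the draft questionnaire. The sketch below assumes a hypothetical plan in which each table shell lists the variables it needs; any variable that no question collects signals a gap in the design.

```python
# Hypothetical table shells sketched before fieldwork: each lists the variables it needs.
ANALYSIS_PLAN = {
    "Consideration intent by segment": ["segment", "consideration"],
    "Awareness by age band": ["age", "aware"],
    "Drivers of repurchase intent": ["repurchase", "price_rating", "taste_rating"],
}

# Variables the draft questionnaire actually collects (also hypothetical).
QUESTIONNAIRE = {"segment", "age", "consideration", "repurchase", "taste_rating"}


def missing_variables(plan: dict[str, list[str]], collected: set[str]) -> dict[str, list[str]]:
    """Map each planned table to the variables that no question collects."""
    gaps = {}
    for table, variables in plan.items():
        missing = [v for v in variables if v not in collected]
        if missing:
            gaps[table] = missing
    return gaps


for table, missing in missing_variables(ANALYSIS_PLAN, QUESTIONNAIRE).items():
    print(f"{table}: no question collects {', '.join(missing)}")
```

An empty result doesn’t prove the design is right, but a non-empty one proves it is incomplete, which is far cheaper to discover before fieldwork than after.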
