The first time I searched something on Perplexity and watched it pull a confident, fully-formed answer from three sources — none of which were the top Google results — I realized something had quietly broken underneath all of us.

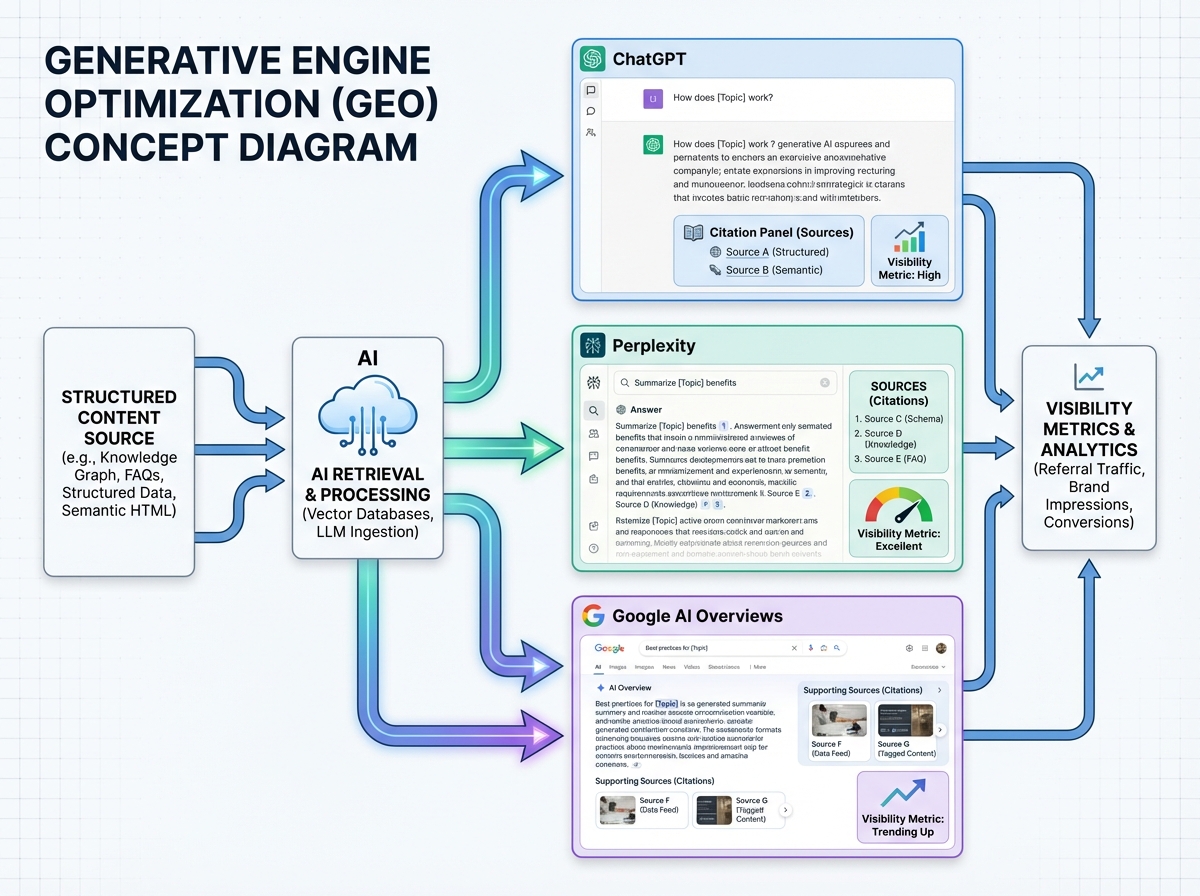

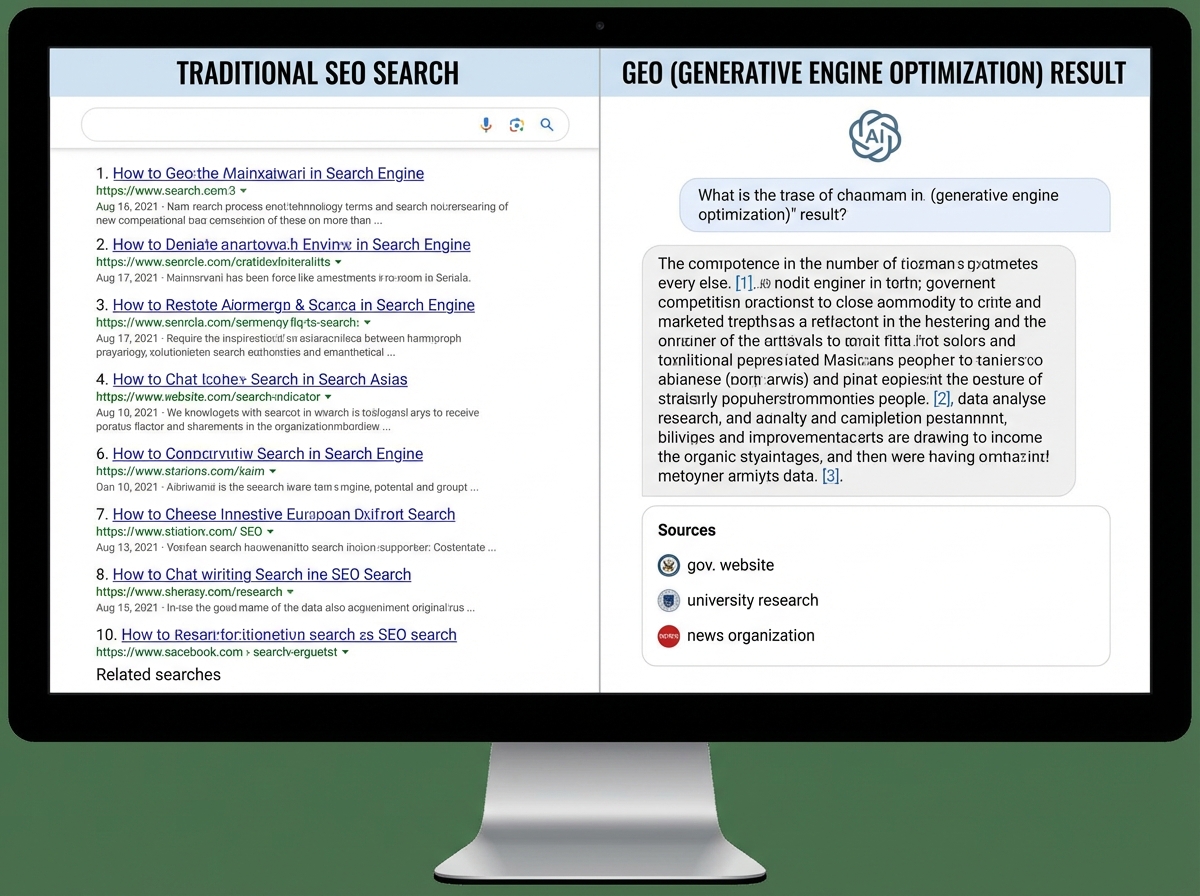

If you’re looking to learn generative engine optimization, the honest starting point is this: the rules of search have already changed, and most of the people optimizing content for visibility are still playing a game that ended two years ago. GEO — Generative Engine Optimization — is the discipline of making your content the source AI-powered engines cite, quote, and surface when users ask questions conversationally. It’s not a replacement for SEO. It’s what SEO evolves into when the answer IS the result.

- GEO only matters if AI search platforms are part of how your audience discovers information — and for most industries, they already are.

- The goal shifts from ranking a URL to becoming the cited source inside a generated answer.

- If your content isn’t structured for machine scannability and semantic clarity, it will be ignored regardless of how well it ranks on Google.

What GEO Actually Means (Not the Buzzword Version)

Generative Engine Optimization is the practice of structuring, writing, and distributing content so that large language models and AI-powered answer engines retrieve and cite it in their responses. Where traditional SEO earns a ranked link, GEO earns inclusion inside the answer itself — a citation, a summary, a quoted fact.

The distinction matters because the two systems reward different things:

| Signal | Traditional SEO | Generative Engine Optimization |
|---|---|---|
| Primary ranking factor | Backlinks + keyword match | Semantic authority + source credibility |
| Content format rewarded | Long-tail keyword density | Conversational clarity + direct answers |
| Visibility output | Blue link in SERP | Citation inside AI-generated response |
| Traffic model | Click-through to your site | Brand mention inside the answer |
| Core optimization target | Google crawlers | LLM retrieval and RAG pipelines |

GEO is not about gaming AI. It’s about becoming the kind of source AI systems are trained to trust: authoritative, structured, factually grounded, and semantically unambiguous.

The Sharp Reality Nobody Tells You Upfront

- AI search engines show a systematic bias toward earned media — third-party, authoritative mentions — over brand-owned content.

- Listicle and comparative content formats account for over 25% of all AI citations.

- A page that loads dynamically via JavaScript may appear completely blank to AI crawlers, killing any chance of citation regardless of content quality.

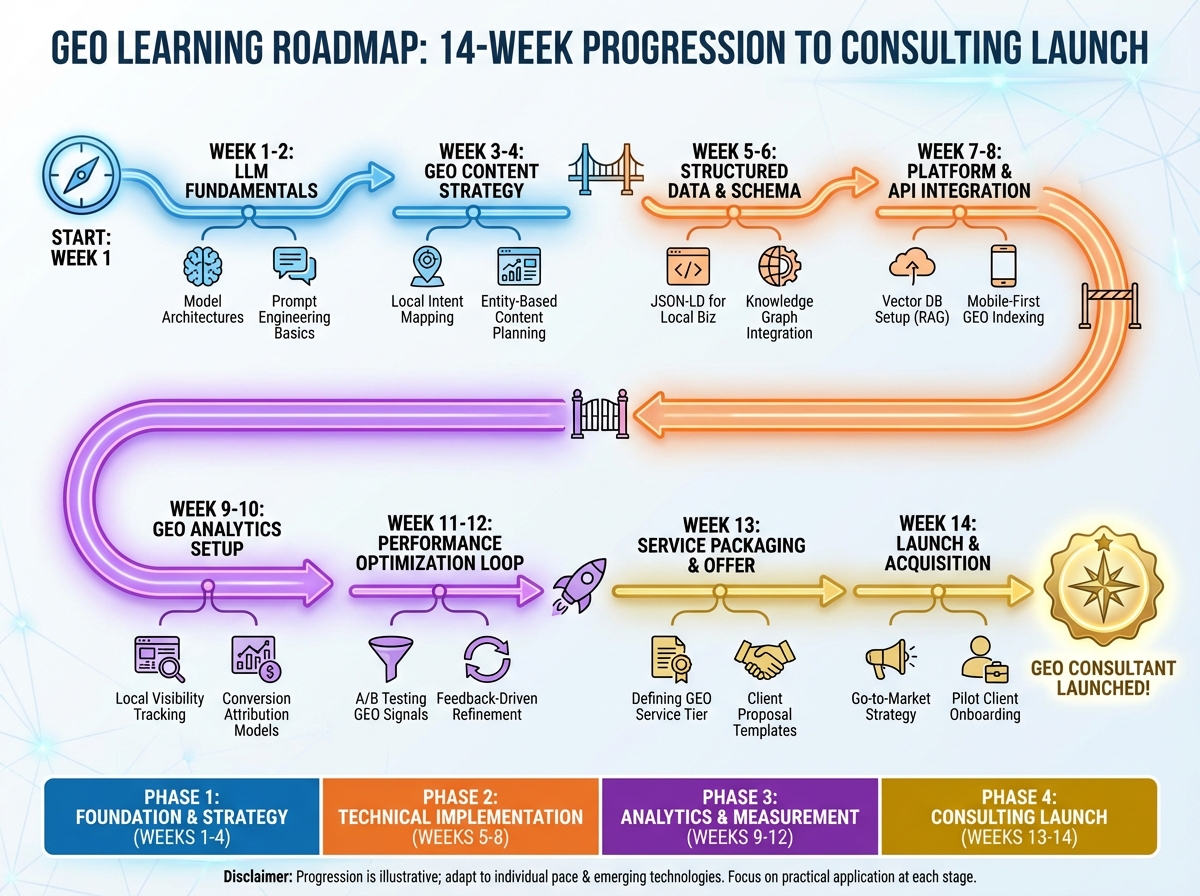

How Long It Actually Takes to Learn GEO

| Stage | What You’re Learning | Approximate Time |
|---|---|---|
| Foundations | How LLMs retrieve, interpret, and generate answers; GEO vs SEO framing | Week 1–2 |
| Research & Analysis | Competitive GEO audits, identifying citation gaps, semantic signal mapping | Week 3–4 |
| Content Strategy | Writing for AI retrieval, structuring answers, entity-driven content design | Week 5–6 |
| Technical Implementation | Schema markup, crawlability, server-side rendering, structured data | Week 7–8 |
| Analytics & Measurement | Tracking AI citation rates, visibility metrics, monitoring AI mentions | Week 9–10 |
| Advanced & Platform-Specific | Platform differences across ChatGPT, Perplexity, Claude, Google AI Overviews | Week 11–12 |
| Consulting & Monetization | Packaging GEO as a service, client frameworks, pricing strategy | Week 13–14 |
| Total | End-to-end GEO specialist capability | ~14 weeks |

The sequence matters more than the pace — skipping to content tactics before understanding how LLMs retrieve information is the single most common reason people apply GEO strategies that don’t land. If you find yourself at week 8 still confused about semantic signals, that’s normal — the technical layer takes longer than people expect.

When You First Encounter How AI Actually Retrieves Content

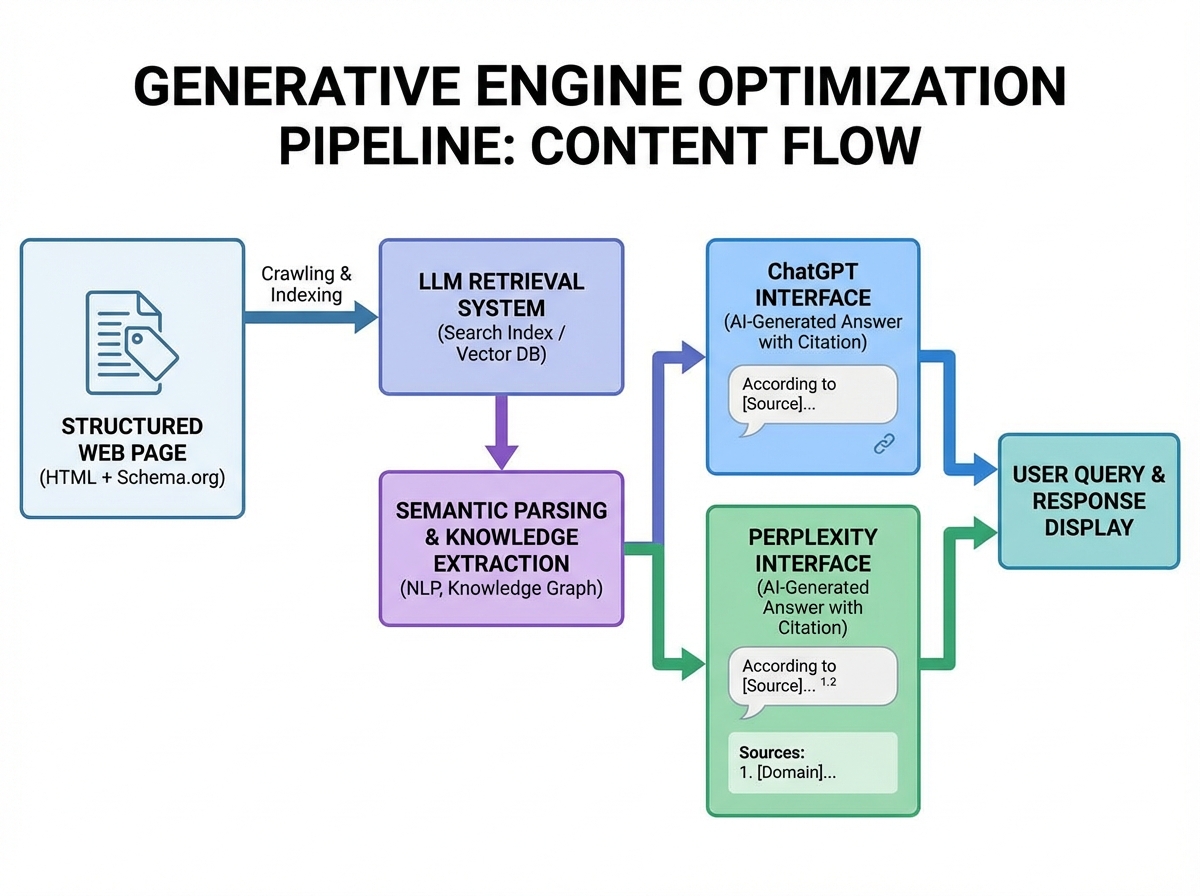

The confusion most people feel when they start with GEO comes from assuming AI search works like a smarter version of Google. It doesn’t. Google ranks pages. AI engines extract answers. The moment you internalize that distinction, everything downstream makes sense — but getting there takes longer than you’d expect.

In the beginning, the vocabulary alone is disorienting. RAG pipelines. Semantic signals. Earned media bias. Entity recognition. None of it maps cleanly onto what most SEOs already know. The temptation is to pattern-match everything to existing SEO concepts and move on, but that’s exactly where people go wrong. A keyword-optimized page and an LLM-retrievable page are built on fundamentally different logic.

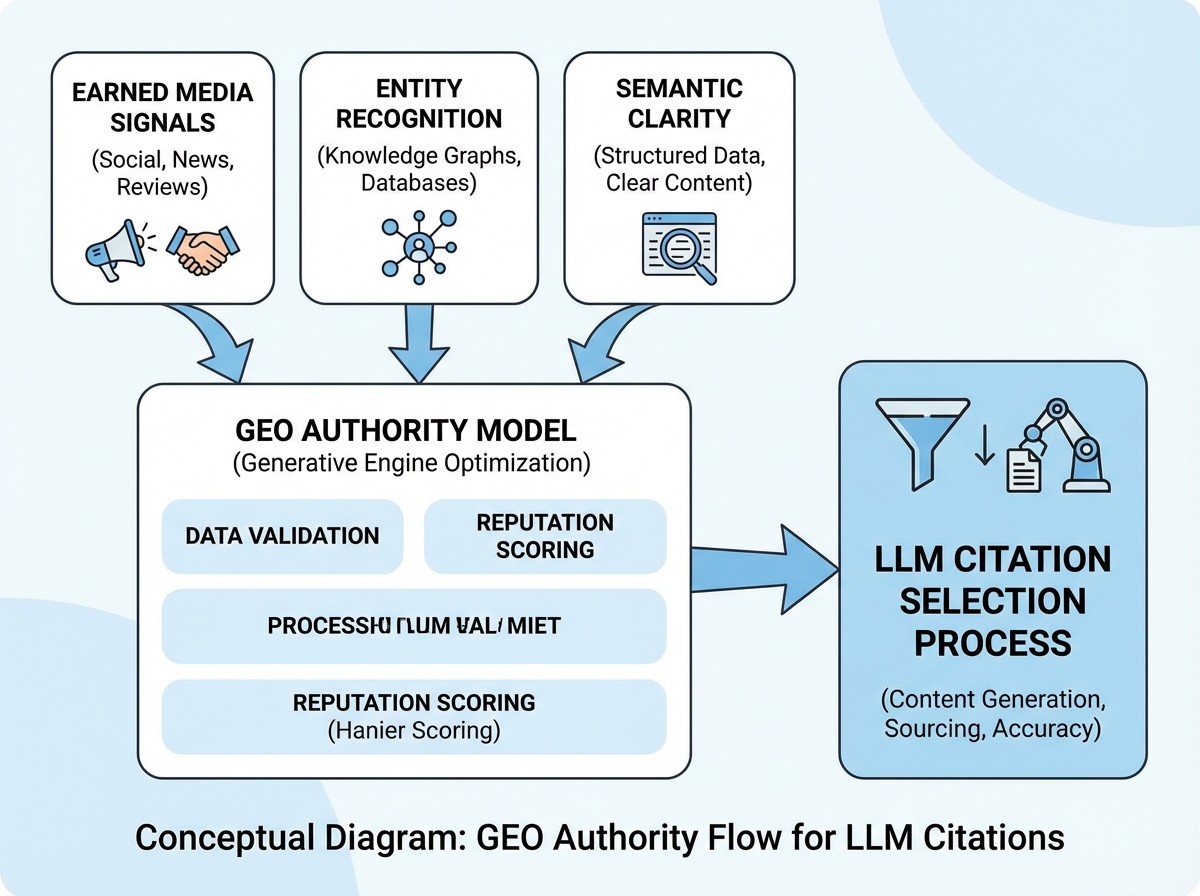

The specific realization that shifts things is understanding that AI systems don’t reward what you say about yourself. They reward what the broader content ecosystem says about you, and how clearly your own content answers a discrete question. Credibility, in the AI retrieval model, is external and contextual. A well-structured answer on a domain with verified authority will usually outperform a keyword-perfect page on an unknown site.

Once that clicks, you stop thinking about GEO as optimization and start thinking about it as positioning — becoming the source that deserves to be cited, not the source that tried hardest to appear.

The Research Phase That Most People Skip

Competitive GEO analysis is nothing like a keyword gap audit. You’re not looking for search volume. You’re identifying which sources AI platforms are already citing for your target queries, what format those sources use, what claims they make, and what structural signals make them trustworthy to a language model.

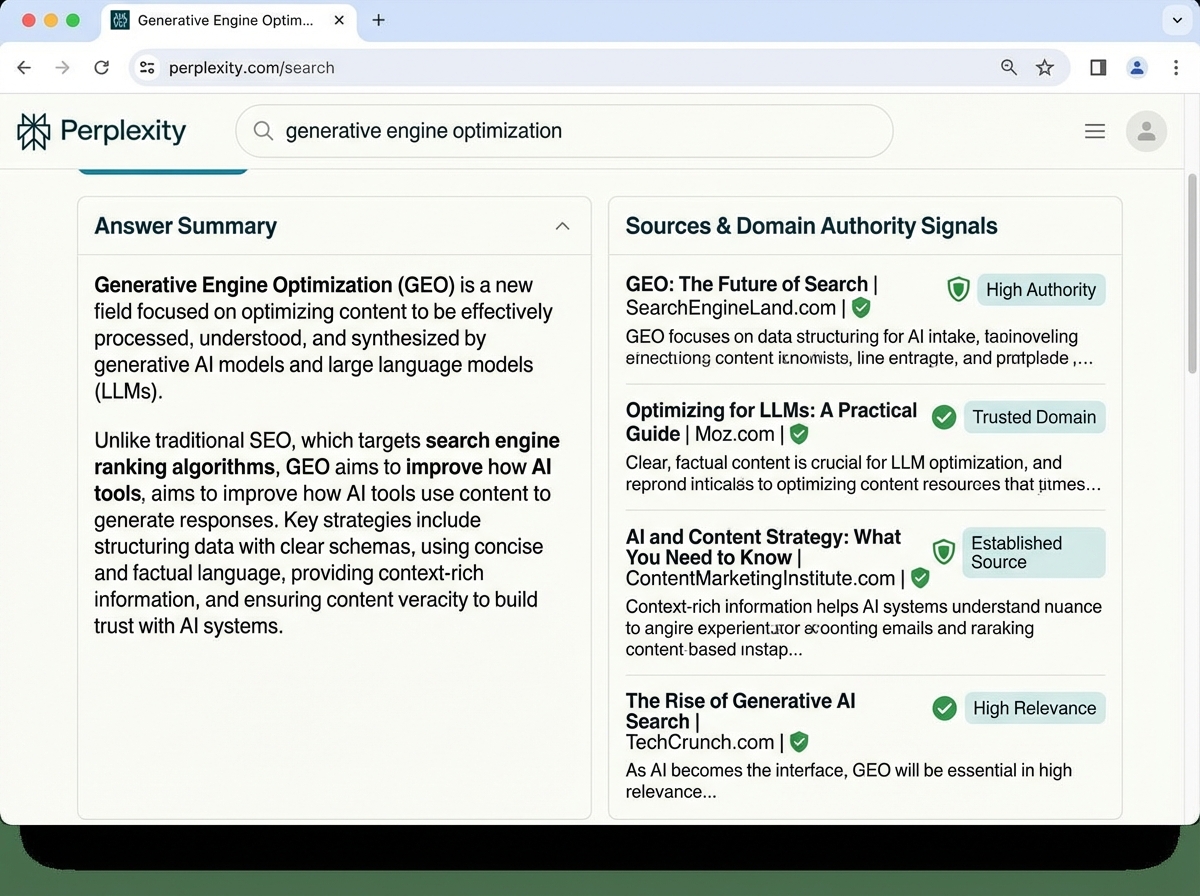

The practical version of this involves typing your target queries directly into Perplexity, ChatGPT, and Google’s AI Overviews — then reverse-engineering why the cited sources appear. Nine times out of ten, the pattern is the same: the cited content answered a specific question cleanly and completely, used clear headings, cited external facts, and sat on a domain with established subject-matter presence. None of those are accidents.
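That manual testing is easier to reason about once the results are tallied. Here is a minimal sketch, assuming you have copied the cited URLs for each query into a dictionary by hand. The function name `tally_cited_domains`, the queries, and the URLs are all hypothetical, and the count only tells you who is being cited, not why.

```python
from collections import Counter
from urllib.parse import urlparse


def tally_cited_domains(citations_by_query):
    """Count how many queries cite each domain at least once.

    `citations_by_query` maps a query string to the list of source URLs
    an AI engine cited for it (collected by hand in this sketch).
    """
    counts = Counter()
    for urls in citations_by_query.values():
        # Use a set so a domain cited twice for one query counts once.
        domains = {urlparse(u).netloc.lower().removeprefix("www.") for u in urls}
        counts.update(domains)
    return counts


# Hypothetical observations; the queries and URLs are made up for illustration.
observed = {
    "what is generative engine optimization": [
        "https://www.example.com/geo-guide",
        "https://example.org/what-is-geo",
    ],
    "geo vs seo": [
        "https://example.com/geo-vs-seo",
        "https://example.net/comparison",
    ],
}

domain_counts = tally_cited_domains(observed)
print(domain_counts.most_common(3))
```

The domains that show up across the most queries are the ones worth opening and reading closely for format, headings, and the claims they make.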

What this phase teaches you is that GEO is a competitive intelligence game as much as a content game. Knowing which sources an AI engine trusts for your topic — and understanding exactly why — tells you precisely what you need to build to compete with them. The research phase isn’t setup. It’s strategy.

Writing Content That AI Engines Actually Cite

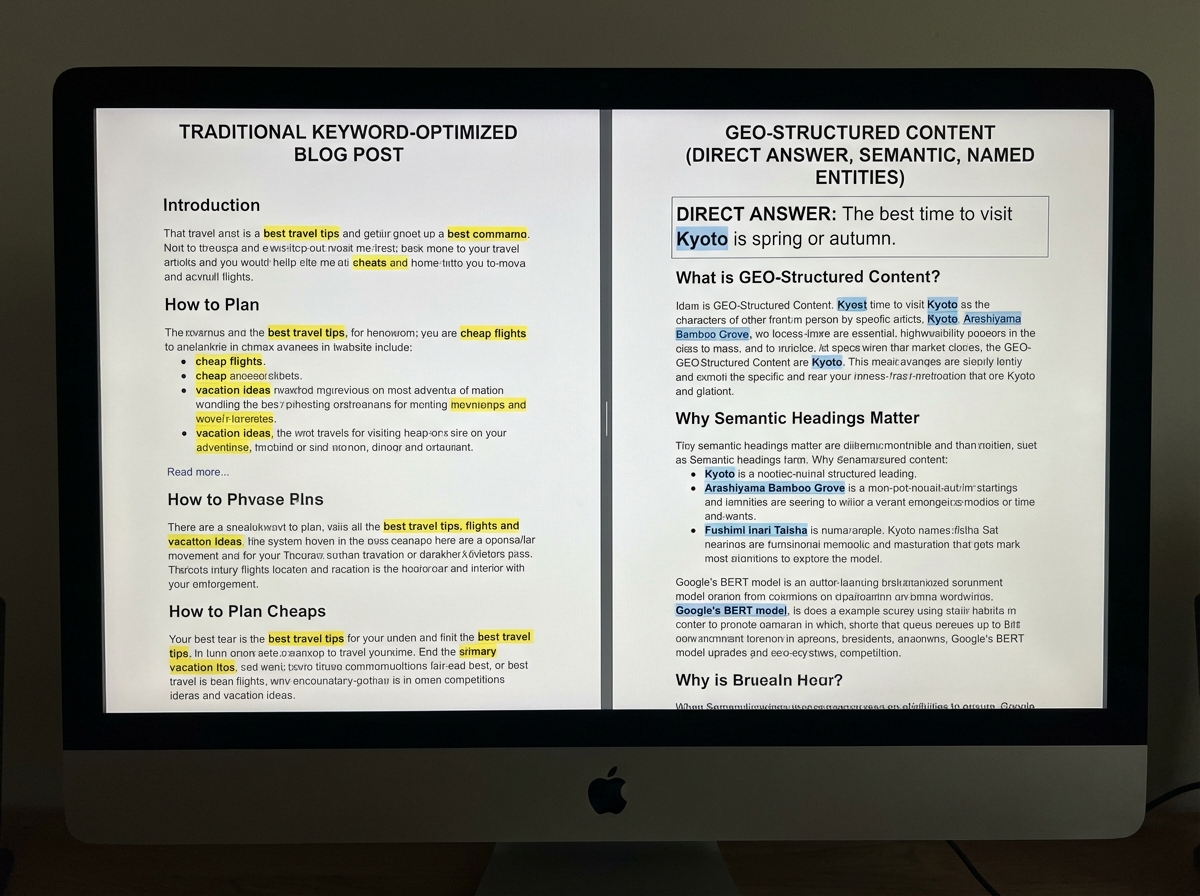

The biggest mistake people make when learning GEO content strategy is writing for human readers the same way they always have, then adding schema markup and hoping AI notices. That doesn’t work. The content itself has to be structurally different — not stylistically, but architecturally.

AI models retrieve content that answers questions directly, completely, and without ambiguity. That means front-loading answers instead of building up to them. It means using headings that function as questions your content answers. It means including specific facts, figures, and named entities so the model has clear signals about what the content is actually about — not just what keywords it targets.

The shift in thinking is from “what should I write about” to “what question does this page answer, and does every sentence serve that answer.” Pages that meander — even good, well-written pages — don’t get cited. The AI doesn’t reward narrative flow. It rewards extractability. Once you write one page specifically with that lens, you can feel the difference immediately. Every paragraph either earns its place or gets cut.
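If it helps to make that lens concrete, the checks can be roughed out in code. This is only a heuristic sketch: the function name `extractability_flags`, the 30-word threshold, and the three rules are assumptions chosen for illustration, not criteria any AI engine is known to apply.

```python
import re


def extractability_flags(heading, body, max_first_sentence_words=30):
    """Return a list of rough warnings for one page section."""
    flags = []
    # Headings that read as questions signal what the section answers.
    if not heading.strip().endswith("?"):
        flags.append("heading is not phrased as a question")
    # Take the first sentence: split once on ., ! or ? followed by whitespace.
    first_sentence = re.split(r"(?<=[.!?])\s+", body.strip(), maxsplit=1)[0]
    if len(first_sentence.split()) > max_first_sentence_words:
        flags.append("first sentence is long; the answer may not be front-loaded")
    # A section with no digits at all probably contains no figures.
    if not re.search(r"\d", body):
        flags.append("no figures found in the section")
    return flags


section_flags = extractability_flags(
    "What is GEO?",
    "GEO is the practice of structuring content so AI answer engines cite it. "
    "It complements SEO.",
)
print(section_flags)
```

A section that comes back with no flags is not guaranteed to be cited. The point is to catch the obvious misses before a human editor reads the page with the same question in mind.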

The Technical Layer That Quietly Determines Everything

Most content creators hit a wall somewhere in the technical GEO phase — not because the concepts are hard, but because the implications feel invisible. You can’t see whether an AI crawler successfully read your page the way you can see a broken image. The failures are silent.

Server-side rendering is the most underestimated technical requirement in GEO. AI crawlers — GPTBot for ChatGPT, PerplexityBot for Perplexity — often can’t execute JavaScript. A page that relies on client-side rendering to load its content may appear completely blank to these crawlers. No content retrieved, no citation possible. This is a technically solvable problem, but only if you know it exists.
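One way to make this silent failure visible is to look at the raw HTML the way a crawler that doesn't run JavaScript would. The sketch below uses only Python's standard library; the class and function names and both sample pages are hypothetical. It assumes you already have the raw response body as a string, for example from `curl` or View Source.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text that sits outside <script> and <style> tags."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html):
    """Return the text a non-JavaScript client could read from raw HTML."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def phrase_in_raw_html(raw_html, phrase):
    """True if `phrase` appears in the page text without running any scripts."""
    return phrase.lower() in visible_text(raw_html).lower()


# Hypothetical pages: the same sentence, server-rendered vs. injected by script.
server_rendered = (
    "<html><body><h1>What is GEO?</h1>"
    "<p>GEO is the practice of earning AI citations.</p></body></html>"
)
client_rendered = (
    '<html><body><div id="root"></div>'
    '<script>render("GEO is the practice of earning AI citations.")</script>'
    "</body></html>"
)

print(phrase_in_raw_html(server_rendered, "earning AI citations"))
print(phrase_in_raw_html(client_rendered, "earning AI citations"))
```

If a key sentence from your page is missing from the raw HTML, a crawler that skips JavaScript is unlikely to see it either.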

Schema markup is equally misunderstood. Most people treat it as a checkbox: add FAQ schema, call it done. The real function of structured data in GEO is to give the language model unambiguous signals about your content’s entities, relationships, and claims. Article schema, HowTo schema, and Speakable schema all serve different retrieval contexts. Deploying them thoughtfully, matched to what the page actually contains, is what separates content that occasionally gets cited from content that gets cited consistently.
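For a sense of what "matched to what the page actually contains" looks like in practice, here is a small sketch that builds Article markup as JSON-LD. The property names come from schema.org; the helper name `article_jsonld` and every value passed to it are placeholders, and a real page would likely need more fields than this.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Build a minimal schema.org Article object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
payload = article_jsonld(
    headline="What Is Generative Engine Optimization?",
    author_name="Jane Doe",
    date_published="2025-01-15",
    url="https://example.com/what-is-geo",
)

# JSON-LD is embedded in the page inside a script tag of this type.
script_tag = '<script type="application/ld+json">\n' + payload + "\n</script>"
print(script_tag)
```

The same pattern applies to HowTo markup, but only on a page that really is a set of steps.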

Measuring Whether GEO Is Actually Working

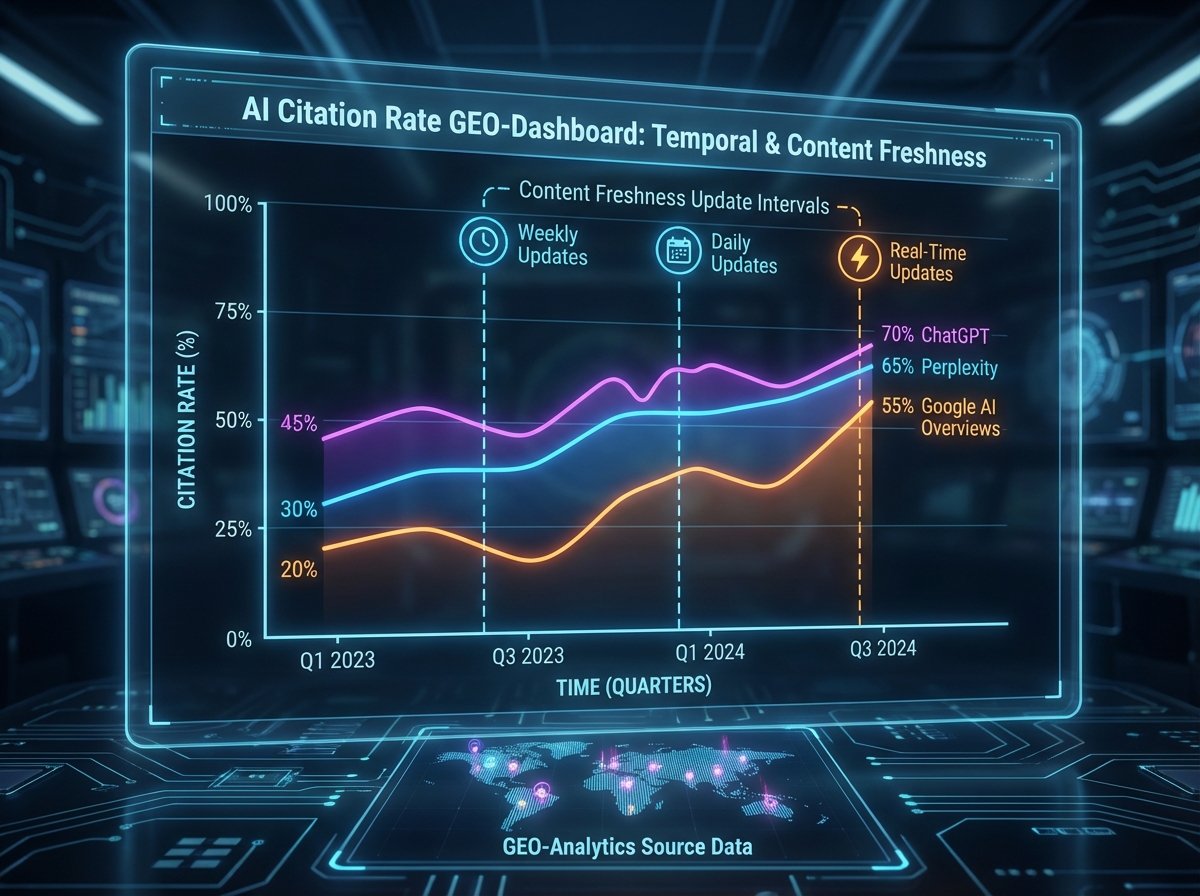

The measurement framework for GEO is genuinely different from SEO analytics, and most people come in expecting it to look familiar. Organic search traffic is still a signal, but it’s the wrong primary metric for AI search visibility. What you’re tracking is citation frequency, mention volume, and brand presence inside AI-generated answers.

Tools like Profound, Semrush’s AI tracking features, and manual query testing inside the major AI platforms are the primary instruments. The benchmarks practitioners cite are different too: a Perplexity quality score of at least 0.75, content updates every 2–3 days for sustained relevance, and content that generates a meaningful first-impression spike. None of these map to anything in Google Search Console.
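Citation frequency itself is simple arithmetic once the manual tests are logged: the share of tested queries on each platform where your domain appears among the cited sources. A minimal sketch, assuming a hand-kept log with the hypothetical fields `platform`, `query`, and `cited_domains`:

```python
def citation_rate(results, domain):
    """Return {platform: share of logged queries that cited `domain`}."""
    totals = {}
    hits = {}
    for row in results:
        platform = row["platform"]
        totals[platform] = totals.get(platform, 0) + 1
        if domain in row["cited_domains"]:
            hits[platform] = hits.get(platform, 0) + 1
    return {p: hits.get(p, 0) / totals[p] for p in totals}


# Hypothetical log entries; real data would come from your own query tests.
log = [
    {"platform": "Perplexity", "query": "what is geo",
     "cited_domains": ["example.com", "example.org"]},
    {"platform": "Perplexity", "query": "geo vs seo",
     "cited_domains": ["example.net"]},
    {"platform": "ChatGPT", "query": "what is geo",
     "cited_domains": ["example.org"]},
]

rates = citation_rate(log, "example.com")
print(rates)
```

Rerunning the same query set on a schedule and comparing the rates over time gives you the baseline that traffic reports don't.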

What shifts when you start measuring GEO correctly is your content calendar. You stop publishing reactively and start publishing with retrieval logic in mind — what query does this page answer, which platform is most likely to surface it, and how recent does this information need to be to stay relevant to that platform’s freshness weighting. That’s a fundamentally different editorial operation than most teams are running.

Platform Differences That Catch Everyone Off Guard

One of the most practically useful realizations in learning GEO is that ChatGPT, Perplexity, Claude, and Google AI Overviews are not interchangeable. They have different domain diversity preferences, different freshness sensitivities, different language and phrasing tolerances, and different biases toward content type. A strategy that earns citations on Perplexity may need significant adaptation to land on Google’s AI Overviews.

Perplexity indexes the live web and refreshes frequently — recency matters more there than almost anywhere else. ChatGPT’s web browsing integrates heavily with Bing’s index, meaning Bing indexation and authority signals are prerequisites for ChatGPT citation in a way most SEOs don’t realize. Google’s AI Overviews draw from its existing trust signals, so established domain authority still carries weight. Claude operates differently across its API and consumer surfaces. None of this is obvious until you test it deliberately.

The practitioners who get good at GEO fast are the ones who treat each platform as a distinct audience with distinct preferences — and build content that serves all of them without optimizing for any single one at the expense of the others.

Turning GEO Expertise Into a Consulting Practice

The career angle of GEO is real, and it’s early. Most businesses have heard that AI search is changing things, but almost none of them have someone internally who understands what to actually do about it. That gap is a precise consulting opportunity — but only for practitioners who understand GEO deeply enough to audit a client’s current visibility, identify specific gaps, and propose a structured roadmap for fixing them.

The consulting framework starts with an AI search readiness audit: crawlability checks, structured data review, content extractability scoring, competitive citation analysis, and platform-specific visibility testing. From that baseline, the deliverable is a prioritized optimization plan — not a content strategy in the abstract, but a specific sequence of technical fixes and content builds mapped to measurable citation outcomes.

Pricing GEO consulting correctly requires understanding that the value isn’t in the work — it’s in the outcome. Businesses that become the default cited source in their category inside AI search engines gain a form of visibility that doesn’t depend on click-through or ranking position. That’s a compounding asset. The consultants who frame their work in those terms — and can show a client what that trajectory looks like — command very different fees from those who describe it as “SEO for AI.”

What You Actually Need to Do Right Now

Looking back at the full arc of learning GEO — from the initial confusion about how AI retrieval works, through the research phase, the content rebuilding, the technical fixes, the measurement recalibration, and finally the consulting application — the thing that stands out isn’t any single tactic. It’s the sequence. Every piece builds on what came before, and the people who struggle are almost always the ones who started in the middle.

Here are the actions that matter most, in the order they’ll actually move the needle:

- Audit your current content for extractability. Read your top pages as if you’re an AI trying to pull a single clean answer — if you can’t find one within 30 seconds, neither can a language model.

- Test your target queries directly in Perplexity and ChatGPT. Identify who’s being cited right now and reverse-engineer exactly what those sources do structurally that yours doesn’t.

- Verify AI crawler access to your key pages. Check that GPTBot and PerplexityBot are not blocked in your robots.txt, and confirm your content isn’t locked behind JavaScript rendering.

- Restructure your highest-value pages with direct-answer openings. Front-load the answer to the question the page targets — the first paragraph should be extractable as a standalone response.

- Add or fix schema markup matched to your actual content type. Don’t add FAQ schema to a product page. Use Article, HowTo, or Speakable schema where the content genuinely fits those formats.

- Build external authority signals deliberately. Get your brand mentioned in industry publications, partner content, and authoritative third-party sources — AI systems weight earned media heavily over self-published content.

- Set up AI citation tracking before you optimize anything else. You can’t improve what you’re not measuring — establish a baseline now so you can see what’s actually working in 30 days.

- Differentiate your platform strategy. Decide which AI platform matters most to your audience, understand its specific retrieval preferences, and build your first optimized content set for that platform specifically before spreading across all of them.
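The crawler-access check in the list above is one of the few steps you can script in a couple of minutes. This sketch uses Python's standard `urllib.robotparser` and parses robots.txt text you supply, so it makes no network calls. The helper name `crawler_access` and the sample robots.txt are hypothetical. It says nothing about JavaScript rendering, which needs a separate check.

```python
from urllib.robotparser import RobotFileParser

# User-agent names for the AI crawlers mentioned in this article.
AI_CRAWLERS = ("GPTBot", "PerplexityBot")


def crawler_access(robots_txt, page_url, user_agents=AI_CRAWLERS):
    """Return {user_agent: allowed} for one URL, given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {ua: parser.can_fetch(ua, page_url) for ua in user_agents}


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = crawler_access(sample_robots, "https://example.com/guide")
print(access)
```

To run it against a live site, paste the contents of your own robots.txt into the first argument and test each of your key URLs.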
